Inspect

Open-source framework for evaluating language models. Measure AI performance, reasoning, and behavior with structured, reproducible tests.

General Information about Inspect

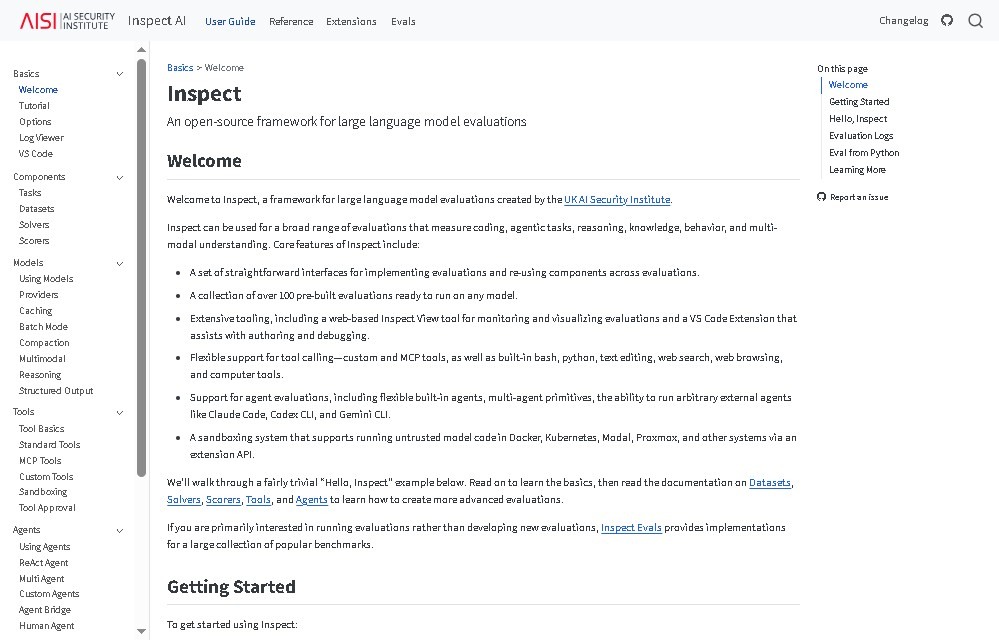

Inspect is an open-source framework for evaluating large language models (LLMs). Developed by the UK AI Security Institute, it gives researchers, developers, and security specialists a structured environment for reproducibly measuring the quality, behavior, and capabilities of AI systems. Its goal is to offer a reliable standard for analyzing how models respond to complex tasks and demanding environments.

Inspect's primary function is to support creating, running, and visualizing performance benchmarks. It can evaluate dimensions such as logical reasoning, specialized knowledge, programming task resolution, and multimodal understanding. This makes it suited to validating a model or an AI agent before it is integrated into production environments or launched commercially.

Its most notable capabilities include:

- Access to a collection of over 100 pre-built evaluations that can be run immediately on any compatible model.

- Flexible interfaces to easily implement new evaluation metrics and custom tasks according to project needs.

- Support for evaluating agents and chained-task workflows, which allows analysis of autonomous behavior and model reasoning.

- Automated scoring of model responses, which shortens analysis time for large volumes of data.

- Integrated visual tools for monitoring logs and reviewing results, accessible directly from the browser or through a dedicated VS Code extension.

At an operational level, Inspect is installed as a Python package, so it can be used from the command line on any development computer or server. The typical workflow consists of defining a set of evaluation tasks (datasets, prompts, and scoring criteria), running them against target models such as GPT-4o, Claude, or Llama, and processing the results to detect biases, errors, or areas for improvement.
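The three parts of that workflow (dataset, prompt, scoring criterion) can be sketched in plain Python. This is a library-free illustration of the idea, not Inspect's API: `EvalSample`, `build_prompt`, `exact_match`, `run_eval`, and `stub_model` are hypothetical names invented for this example, and the stub stands in for a real model call.

```python
from dataclasses import dataclass
from typing import Callable

# NOTE: illustrative only. None of these names come from the Inspect package.


@dataclass(frozen=True)
class EvalSample:
    """One dataset row: the question and the expected answer."""
    question: str
    target: str


def build_prompt(sample: EvalSample) -> str:
    """Prompt template applied to every sample."""
    return f"Answer with a single number.\nQuestion: {sample.question}"


def exact_match(output: str, target: str) -> bool:
    """Scoring criterion: output equals target after trimming whitespace."""
    return output.strip() == target.strip()


def run_eval(model: Callable[[str], str], dataset: list[EvalSample]) -> float:
    """Run every sample through the model and return the fraction scored correct."""
    if not dataset:
        raise ValueError("dataset must not be empty")
    correct = sum(
        exact_match(model(build_prompt(s)), s.target) for s in dataset
    )
    return correct / len(dataset)


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call (assumption): always answers '4'."""
    return "4"


DATASET = [
    EvalSample("What is 2 + 2?", "4"),
    EvalSample("What is 3 + 3?", "6"),
]

if __name__ == "__main__":
    print(f"accuracy: {run_eval(stub_model, DATASET):.2f}")
```

Because the model is passed in as a plain callable, the same dataset and scorer can be reused unchanged against a different model, which is what makes results comparable across runs. Inspect supplies its own interfaces for these pieces; consult its documentation for the actual names and signatures.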

This framework is especially useful for AI evaluators and data scientists seeking rigor and transparency in their validation processes. By using Inspect, technical teams can perform direct comparisons between different models under the same experimental conditions, ensuring that results are consistent and auditable. Its technical and neutral approach positions it as an essential tool for language model auditing and the advancement of safety in generative artificial intelligence.

Frequently Asked Questions about Inspect

What exactly is Inspect?

Inspect is an open-source framework designed to evaluate the performance and behavior of language models in a structured manner.

Who developed the Inspect framework?

The tool was created by the UK AI Security Institute (formerly the UK AI Safety Institute) to support the work of AI researchers and developers.

Is there a cost to use Inspect?

The software is free and open source, though you will need to pay for API usage if you run evaluations against commercial models.

How do I install Inspect on my computer?

It is installed as a Python package with the command `pip install inspect-ai`, after which you can run evaluations from the terminal or from scripts.

What kinds of tasks can be evaluated with Inspect?

You can measure capabilities such as logical reasoning, general knowledge, coding, and multimodal content understanding.

Does Inspect include ready-to-use tests?

Yes, the system offers a collection of over a hundred pre-built evaluations that you can apply immediately to any compatible model.

Can I visualize test results graphically?

Inspect features built-in visual tools to analyze results from a web browser or through a dedicated VS Code extension.

Is it possible to evaluate AI agents with this tool?

Yes, the framework provides specific support for evaluating agents, task chains, and the automated scoring of model-generated responses.

Which language models can I analyze with Inspect?

It is compatible with a wide range of models, including GPT-4, Claude, Llama, and Gemini, provided the necessary credentials are configured.

Inspect Pricing

Open-Source Version: Free

- Full access to the open-source framework for large language model (LLM) evaluation.

- A collection of over 100 pre-built evaluations available for any model.

- Visual tools for monitoring and analyzing results via browser or VS Code extension.

- Interfaces for implementing custom evaluations (reasoning, knowledge, coding, etc.).

- Support for agent evaluation, chained tasks, and automated response grading.

- Execution available via Python or command line.

- Restriction: Does not include the cost of third-party provider APIs (OpenAI, Anthropic, Google, etc.) required to run the models being evaluated.
